Software Engineer, Borderpass (2025-26)

Built AI & automation tools for legaltech workflows.

Borderpass is a legaltech startup that streamlines immigration pathways for individuals coming to Canada. I have contributed to several core features and products since joining the team in May 2025.

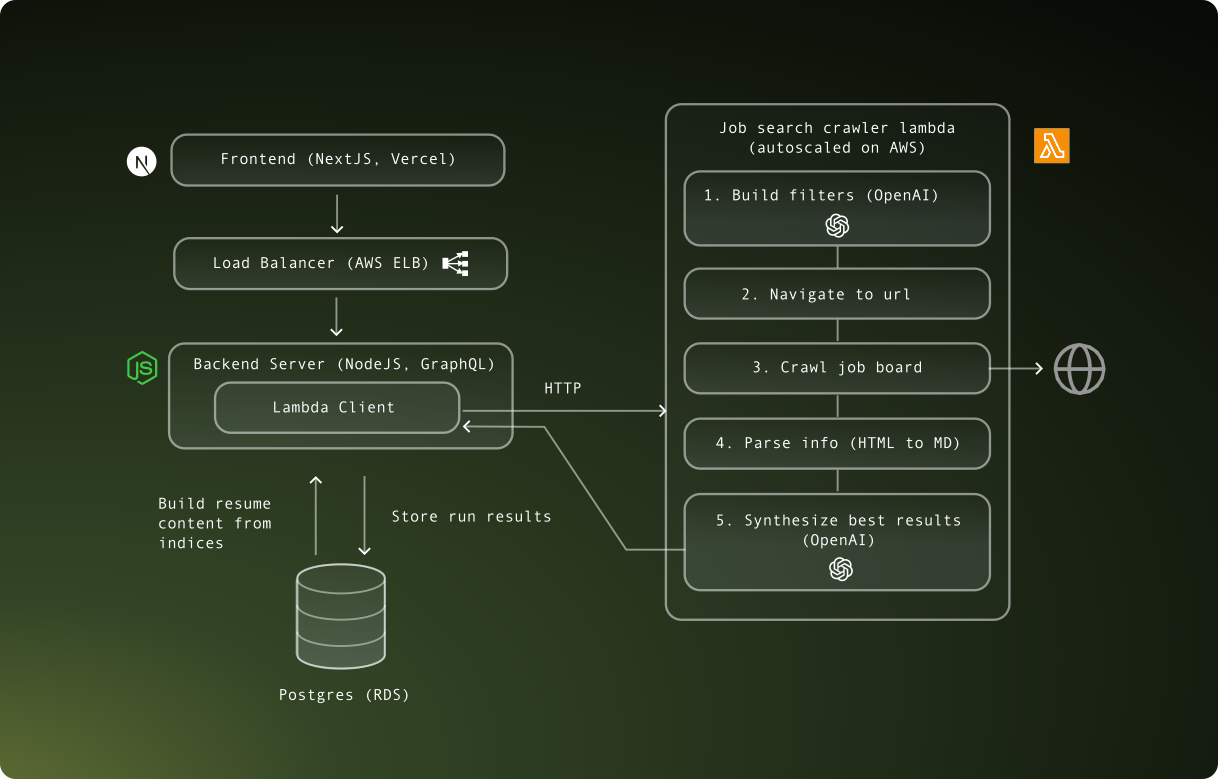

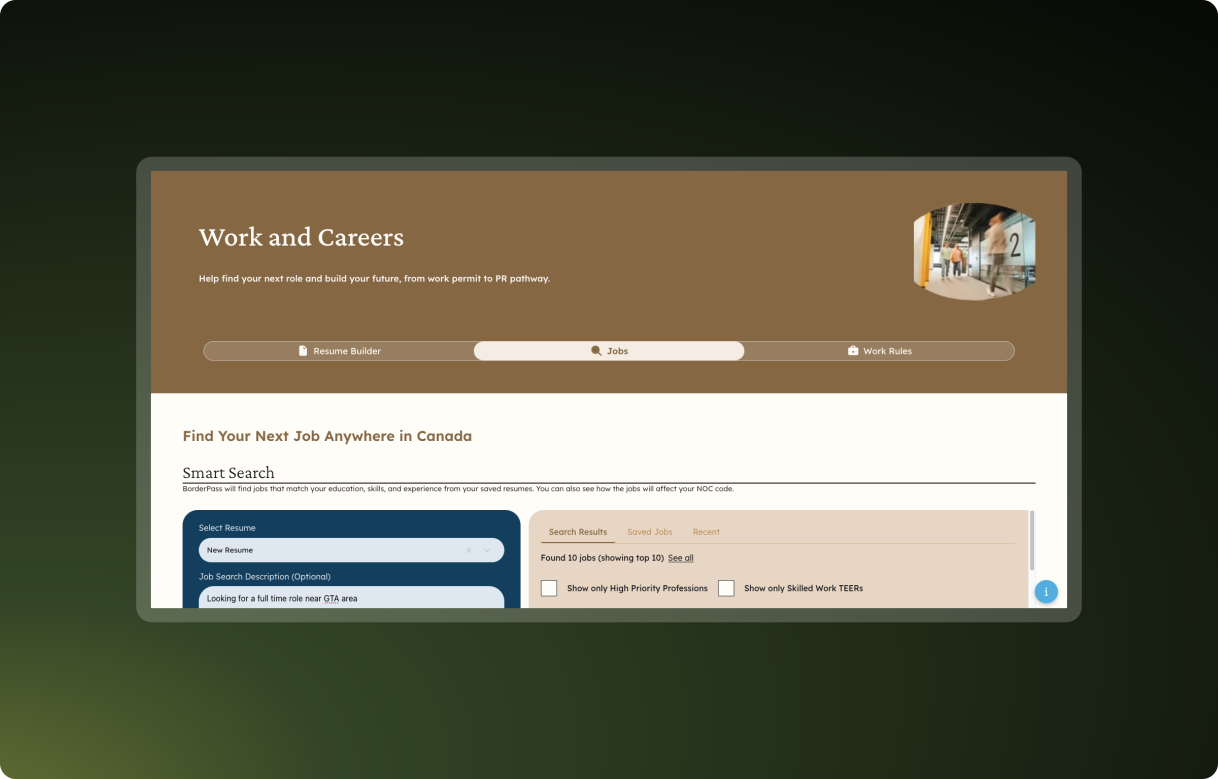

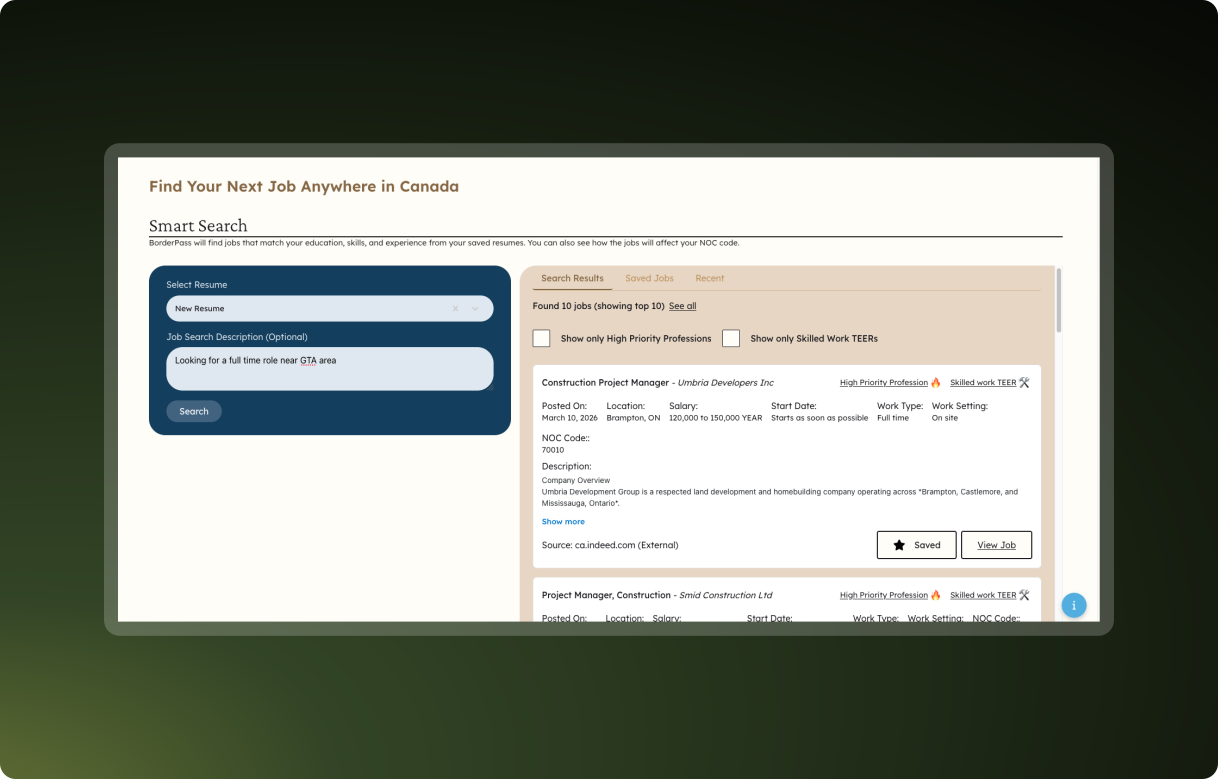

A major project I led was a personalized job search tool that uses document context (stored as vector embeddings) to generate search filters tailored to a given job seeker. It then uses Scrapy (a Python-based web crawling framework) to crawl popular job sites and return a custom list of job postings in seconds.

The Scrapy crawler runs on AWS Lambda, with separate staging and production environments.

    {
      "resumeContent": "ResumeContentSection[]",
      "description": "string"
    }

    {
      "keywords": ["Dancer", "Singer", "Musician"],
      "province": "ON",
      "employment_conditions": ["Day", "Night", "Weekend"],
      "hours_of_work": ["Full time"],
      "salary": "60,000+",
      "work_location": ["On site", "Hybrid"],
      "education_or_training": ["College or apprenticeship"],
      "years_of_experience": ["1 year to less than 3 years"]
    }

https://www.jobbank.gc.ca/jobsearch/job_search_advanced.xhtml?fn21=21211&fper=F&fwcl=D&term=data+scientist&sort=M&fprov=ON&fskl=%C2%AC100000&fskl=%C2%AC100001&fskl=%C2%AC15141

fper=F filters by full-time jobs only. fwcl=D filters by salary range. term=data+scientist filters by keyword search. fprov=ON filters by province. fskl=... filters by work locations like onsite, remote, hybrid.

    {
      "job_title": "Lead Data Scientist, AI and Technology Strategy",
      "employer_name": "RBC Dominion Securities",
      "job_description": "...",
      "location": "Ottawa, ON",
      "work_setting": "On-site",
      "salary": "79,000 to 119,000 annually",
      "work_type": "Permanent Employment Full-time",
      "start_date": "As soon as possible",
      "job_source": "Indeed.com",
      "link": "https://ca.indeed.com/viewjob?jk=2ba329de2f81eb23",
      "is_external": true
    }
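To make the filter-to-URL step concrete, here is a minimal sketch of how a filter object could be turned into a Job Bank search URL. It is not the production code: the function name `build_search_url`, the `HOURS_OF_WORK_CODES` table, and the choice to join keywords with spaces are assumptions for illustration. Only the `fper`, `term`, and `fprov` parameters described above are covered.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.jobbank.gc.ca/jobsearch/job_search_advanced.xhtml"

# Assumed mapping: only the full-time code appears in the example URL above.
HOURS_OF_WORK_CODES = {"Full time": "F"}


def build_search_url(filters: dict) -> str:
    """Build a search URL from a filter object (hypothetical helper)."""
    params = []  # list of pairs keeps parameter order stable

    # fper: hours of work; labels without a known code are skipped
    for label in filters.get("hours_of_work", []):
        code = HOURS_OF_WORK_CODES.get(label)
        if code:
            params.append(("fper", code))

    # term: keyword search; joining keywords with spaces is an assumption
    keywords = filters.get("keywords", [])
    if keywords:
        params.append(("term", " ".join(keywords)))

    # fprov: two-letter province code
    province = filters.get("province")
    if province:
        params.append(("fprov", province))

    if not params:
        return BASE_URL
    # urlencode escapes values and turns spaces into "+"
    return f"{BASE_URL}?{urlencode(params)}"


example_url = build_search_url(
    {"keywords": ["data scientist"], "province": "ON", "hours_of_work": ["Full time"]}
)
```

Keeping the mapping in a plain dictionary means new filter codes can be added without touching the URL-building logic.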

Users can also save favorite results and view search history.

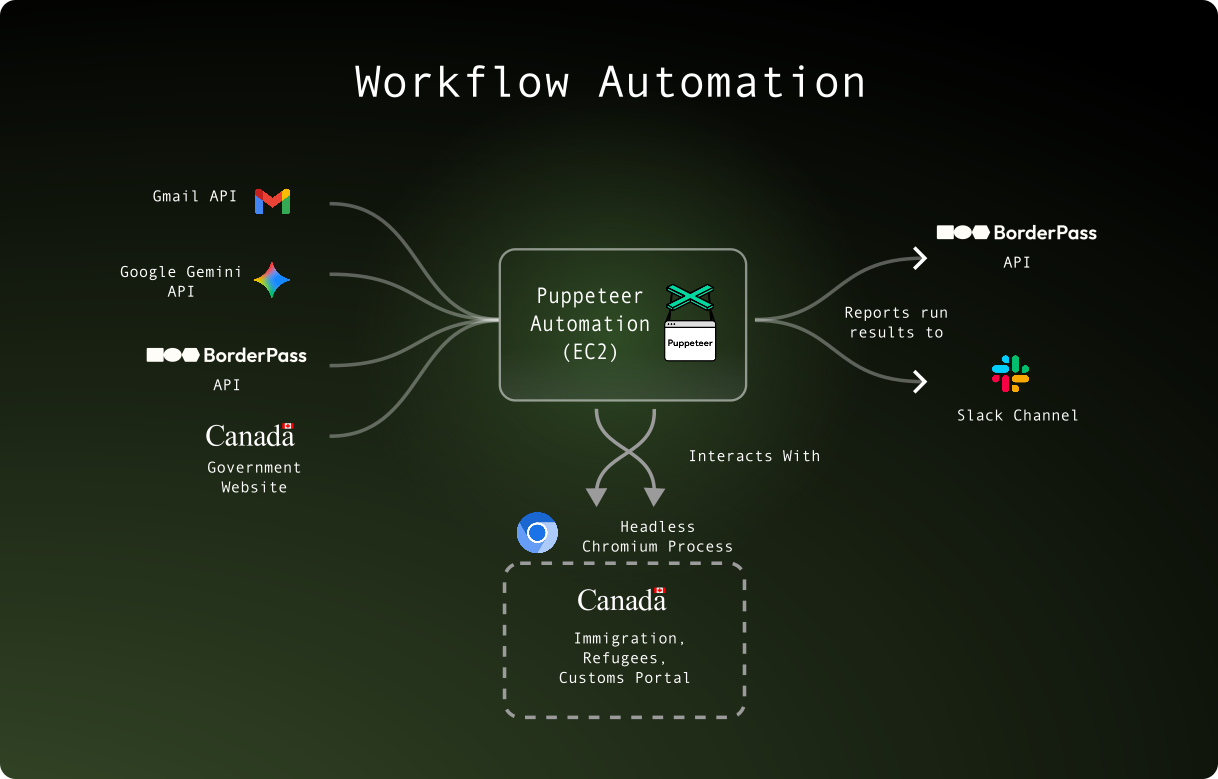

An ongoing project I have contributed to is a headless browser automation tool built with Python, Puppeteer, and Google Gemini. It combines traditional browser automation with the improvisational capabilities of LLMs to perform repetitive online form submissions and reduce manual work.

The main challenge was balancing several moving pieces. The automation must follow a strict sequence of steps, each logged and reported through the server and integrations such as Slack. Along the way it reads and parses emails, uploads and downloads files, and performs complex form submissions in a headless browser.
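The strict, logged sequence of steps can be pictured with a small runner like the one below. This is an illustrative sketch, not the actual implementation: `run_steps` and the `notify` callback (standing in for a Slack or server integration) are hypothetical names.

```python
import logging
from typing import Callable, List, Tuple

logger = logging.getLogger("automation")

# A step is a (name, action) pair; the action raises on failure.
Step = Tuple[str, Callable[[], None]]


def run_steps(steps: List[Step], notify: Callable[[str], None]) -> bool:
    """Run steps in order, stopping and reporting at the first failure."""
    total = len(steps)
    for index, (name, action) in enumerate(steps, start=1):
        logger.info("step %d/%d started: %s", index, total, name)
        try:
            action()
        except Exception as exc:
            # Log the traceback locally and send a short summary outward
            logger.exception("step %d/%d failed: %s", index, total, name)
            notify(f"Step {index} ({name}) failed: {exc}")
            return False
        logger.info("step %d/%d finished: %s", index, total, name)
    notify(f"Run completed: {total} steps")
    return True
```

Stopping at the first failure keeps later steps (for example a form submission) from running against a half-finished state.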

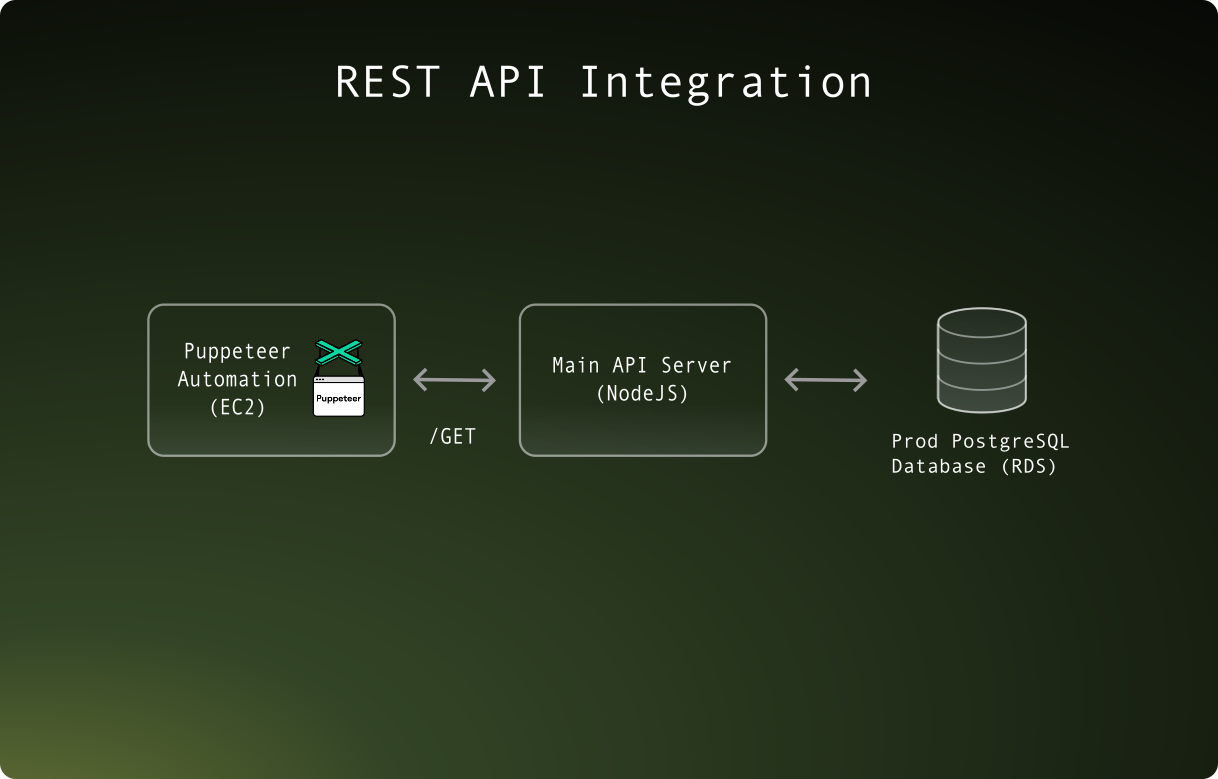

The automation is deployed on an EC2 instance with a configurable cron schedule to perform daily runs.

I helped improve reliability by resolving bot detection issues, optimizing memory usage, and coordinating async workflows so that tasks like email verification code retrieval finish before the run proceeds.
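One common way to make a run wait for something like an email verification code is to poll with a timeout. The sketch below uses only `asyncio` from the standard library; `wait_for_code` and the `fetch_code` callback are hypothetical names, and the real mailbox lookup is left to the caller.

```python
import asyncio
from typing import Awaitable, Callable, Optional


async def wait_for_code(
    fetch_code: Callable[[], Awaitable[Optional[str]]],
    timeout: float = 60.0,
    interval: float = 2.0,
) -> str:
    """Poll fetch_code until it returns a code.

    Raises asyncio.TimeoutError if no code arrives within `timeout` seconds.
    """

    async def poll() -> str:
        while True:
            code = await fetch_code()
            if code:
                return code
            # Yield to the event loop between attempts
            await asyncio.sleep(interval)

    # wait_for cancels the polling task once the deadline passes
    return await asyncio.wait_for(poll(), timeout=timeout)
```

The caller awaits `wait_for_code(...)` before filling in the next form field, so the submission step cannot start until the code is available or the run has failed with a clear timeout.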

A core architectural problem was that the automation lived apart from the main application codebase. As a result, complex business logic had to be translated from TypeScript to Python whenever decision flows were added.

One major refactor I made introduced a REST API interface that lets the automation communicate with the main API server, offloading business logic decisions to the existing backend. This significantly improved maintainability and reduced the risk of business-logic drift.
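As a rough illustration of the idea, the automation side can shrink to a thin client that sends its current state to the backend and acts on the answer. Everything specific here is an assumption: the `/automation/decisions` route, the `caseId` and `formState` payload fields, and the `action` response field are hypothetical and not the real API.

```python
import json
from typing import Callable
from urllib import request


def http_post_json(url: str, payload: dict) -> dict:
    """POST a JSON payload and decode the JSON response (stdlib only)."""
    req = request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


class DecisionClient:
    """Asks the main API server what to do next (hypothetical interface)."""

    def __init__(self, base_url: str, post: Callable[[str, dict], dict] = http_post_json):
        self.base_url = base_url.rstrip("/")
        self._post = post  # injectable so the client can be tested offline

    def next_action(self, case_id: str, form_state: dict) -> str:
        # The backend owns the business rules; the automation only executes
        response = self._post(
            f"{self.base_url}/automation/decisions",  # hypothetical route
            {"caseId": case_id, "formState": form_state},
        )
        return response["action"]
```

With this shape, a new decision flow is implemented once in the TypeScript backend, and the Python automation only needs to handle the action it is told to perform.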